When Google released Gemma 4 on April 2, the company made a pointed argument: you should not need a cloud subscription to run a world-class AI model. With four model sizes ranging from an effective 2B to a dense 31B, native vision and audio, and a commercially permissive Apache 2.0 license, Gemma 4 is the most direct challenge yet to the closed-API model of AI deployment.

What Gemma 4 Actually Does

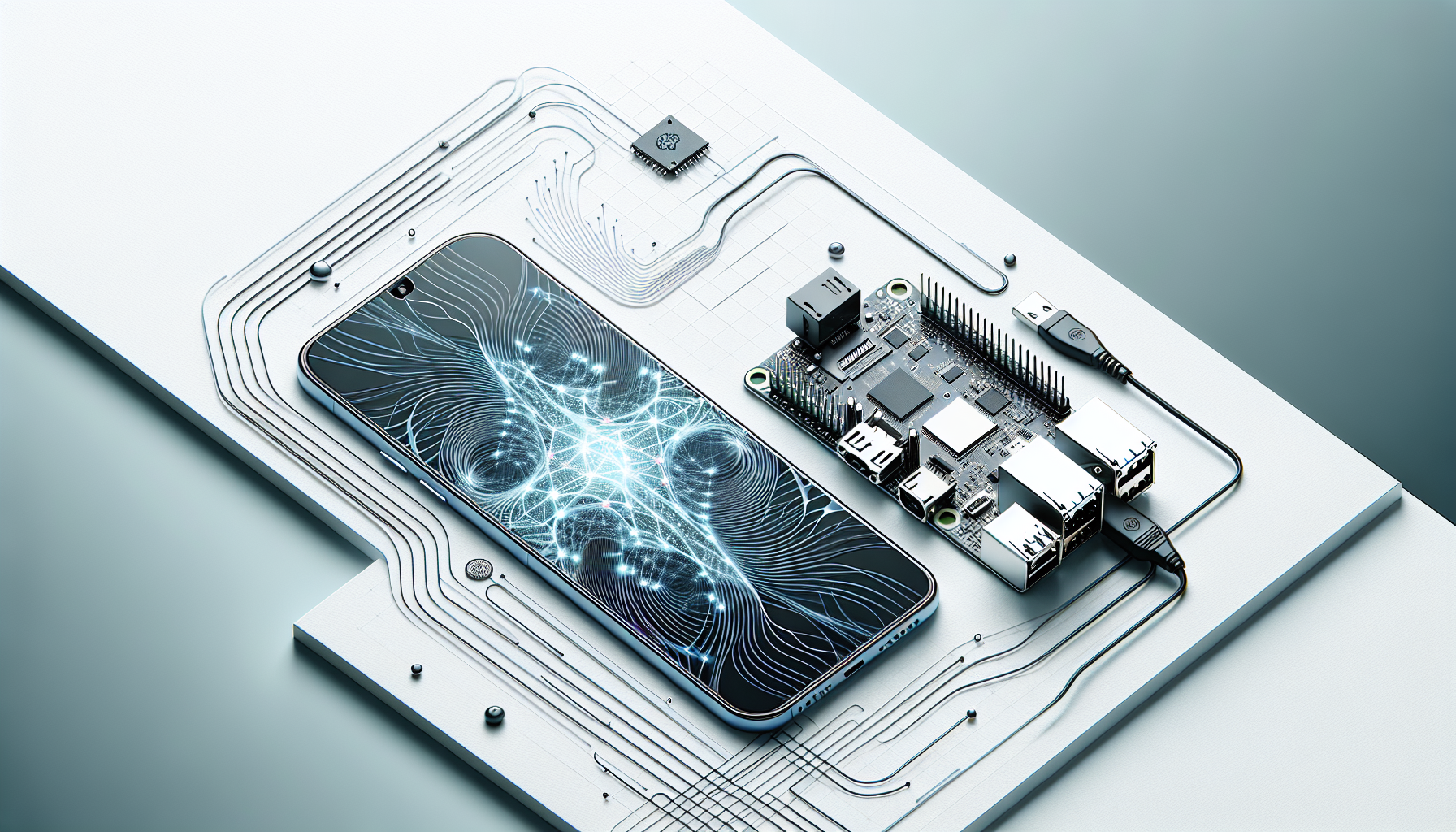

The headline capability is multimodal reasoning at the edge. The two smallest variants, E2B and E4B, use a mixture-of-experts architecture that activates only a 2B or 4B parameter footprint during inference. This keeps RAM and power consumption low enough for the models to run fully offline on a phone, a Raspberry Pi, or an NVIDIA Jetson Orin Nano. Google calls this “near-zero latency” inference, and independent benchmarks suggest the claim holds up on mid-range Android hardware.

For developers who need more headroom, the 26B MoE and 31B Dense variants target server and workstation deployments. The 31B Dense scores 85.2% on MMLU Pro and 89.2% on AIME 2026. It also ranked third on the Arena AI leaderboard at launch, ahead of several models that are billed per token. Context windows reach 256K tokens across the family, with support for over 140 languages.
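
Why the 31B Dense targets workstations rather than phones comes down to simple arithmetic: weight storage is roughly parameter count times bits per parameter. The sketch below is a back-of-the-envelope estimate. It assumes 31B is the full parameter count, and it ignores the KV cache (which grows with context length and can be substantial at 256K tokens), activations, and runtime overhead. The precision levels are illustrative, not a list of formats Google publishes. The function name `weight_memory_gb` is hypothetical.

```python
# Rough weight-only memory estimate: parameters x bits per parameter / 8.
# Assumption: ignores KV cache, activations, and runtime overhead,
# all of which add memory on top of this figure.

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9

if __name__ == "__main__":
    # 31e9 is taken from the model name; the bit widths are illustrative.
    for bits in (16, 8, 4):
        print(f"31B Dense @ {bits:>2}-bit: ~{weight_memory_gb(31e9, bits):.1f} GB")
```

At 16-bit precision the weights alone come to about 62 GB. At 4-bit they come to about 15.5 GB, which brings the model within range of a single high-memory workstation GPU, before the cache and overhead are added.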

The agentic story is equally significant. Google built Gemma 4 specifically for tool-use and multi-step reasoning workflows. The models ship with structured output formatting, function-calling schemas, and optimized latency profiles for orchestration loops — the kind of plumbing that makes the difference between a demo and a production agent.
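
The orchestration plumbing described above reduces to a loop. The model emits a structured tool call, the host program validates and executes it, and the result goes back into the model's context. The sketch below covers only the dispatch step. It assumes a simple JSON call shape, `{"name": ..., "arguments": {...}}`. That shape, the `TOOLS` registry, and the `add` and `lookup_order` tools are hypothetical and do not come from Gemma 4's documentation, whose actual function-calling format may differ.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry: tool name -> Python callable.
TOOLS: dict[str, Callable[..., Any]] = {}

def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function so the dispatcher can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def add(a: float, b: float) -> float:
    return a + b

@tool
def lookup_order(order_id: str) -> dict:
    # Hypothetical stand-in for a real database call.
    return {"order_id": order_id, "status": "shipped"}

def dispatch(model_output: str) -> str:
    """Execute one tool call emitted by the model and return a JSON result.

    Assumes the call looks like {"name": ..., "arguments": {...}}; this shape
    is an illustrative assumption, not Gemma 4's documented format.
    """
    try:
        call = json.loads(model_output)
        fn = TOOLS[call["name"]]  # KeyError if the field or tool is unknown
        result = fn(**call.get("arguments", {}))  # TypeError on bad arguments
        return json.dumps({"ok": True, "result": result})
    except (json.JSONDecodeError, KeyError, TypeError, AttributeError) as exc:
        # Report the failure as data so the model can retry on its next turn.
        return json.dumps({"ok": False, "error": f"{type(exc).__name__}: {exc}"})

if __name__ == "__main__":
    print(dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
    print(dispatch('{"name": "lookup_order", "arguments": {"order_id": "A1"}}'))
    print(dispatch("not json"))
```

Returning errors as data instead of raising them keeps the loop running. A malformed call becomes one more message the model can correct, and this resilience is a large part of the gap between a demo and a production agent.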

The Apache 2.0 Question

Previous Gemma releases used a custom license that restricted redistribution and commercial deployment in ways that frustrated enterprise legal teams. Gemma 4’s switch to Apache 2.0 removes those ambiguities. Companies can now fine-tune, redistribute, and embed Gemma 4 in commercial products without additional agreements.

That licensing change may matter more than the benchmark numbers. Enterprises running sensitive workloads, such as healthcare records, legal documents, and financial data, have been reluctant to route information through third-party APIs regardless of capability. An Apache-licensed model that matches closed-API performance on key benchmarks and can be self-hosted removes that core objection, because the data never has to leave infrastructure the company controls.

Google Cloud is offering Gemma 4 through Vertex AI for teams that want managed infrastructure, but the models are also available on Hugging Face and directly through Google AI for Developers, with no API key required for local inference.

Android Integration and the Edge Roadmap

The Android Developers Blog announced an AICore Developer Preview that integrates Gemma 4 directly into the Android operating system. On supported devices, AICore exposes a system-level inference API, so applications can call Gemma 4 without bundling model weights into their APK. For apps targeting 2026 flagship phones, on-device AI features therefore need not add the size of a model to the download, and such an app can stay roughly as small as a podcast app.

Google’s positioning here is explicit: Gemma 4 is the foundation for a future where intelligent behavior is a default property of the operating system, not a network call. Whether the developer ecosystem follows that lead depends on whether the models stay competitive as the rest of the field advances — but the April 2 release is a credible opening move.

The broader implication is a market structure shift. If capable open models become as easy to deploy as SQLite, the pricing power of cloud AI inference providers comes under pressure. The next six months will show whether enterprises update their AI budgets accordingly.