What the problem was before transformers

Before 2017, language models processed text one word at a time, like reading a sentence left to right and keeping running notes. The further back a word was, the harder it was to remember. Long-range dependencies — the fact that “the bank” in sentence one matters to “the deposit” in sentence seven — got lost.

How attention changes everything

The 2017 paper Attention Is All You Need introduced a different approach. Instead of reading sequentially, the model looks at every word in relation to every other word simultaneously. For each word, it computes an attention score against every other word, then uses those scores to build a weighted summary of the whole sequence.

The result: the model can link “bank” to “deposit” even if twenty words separate them.

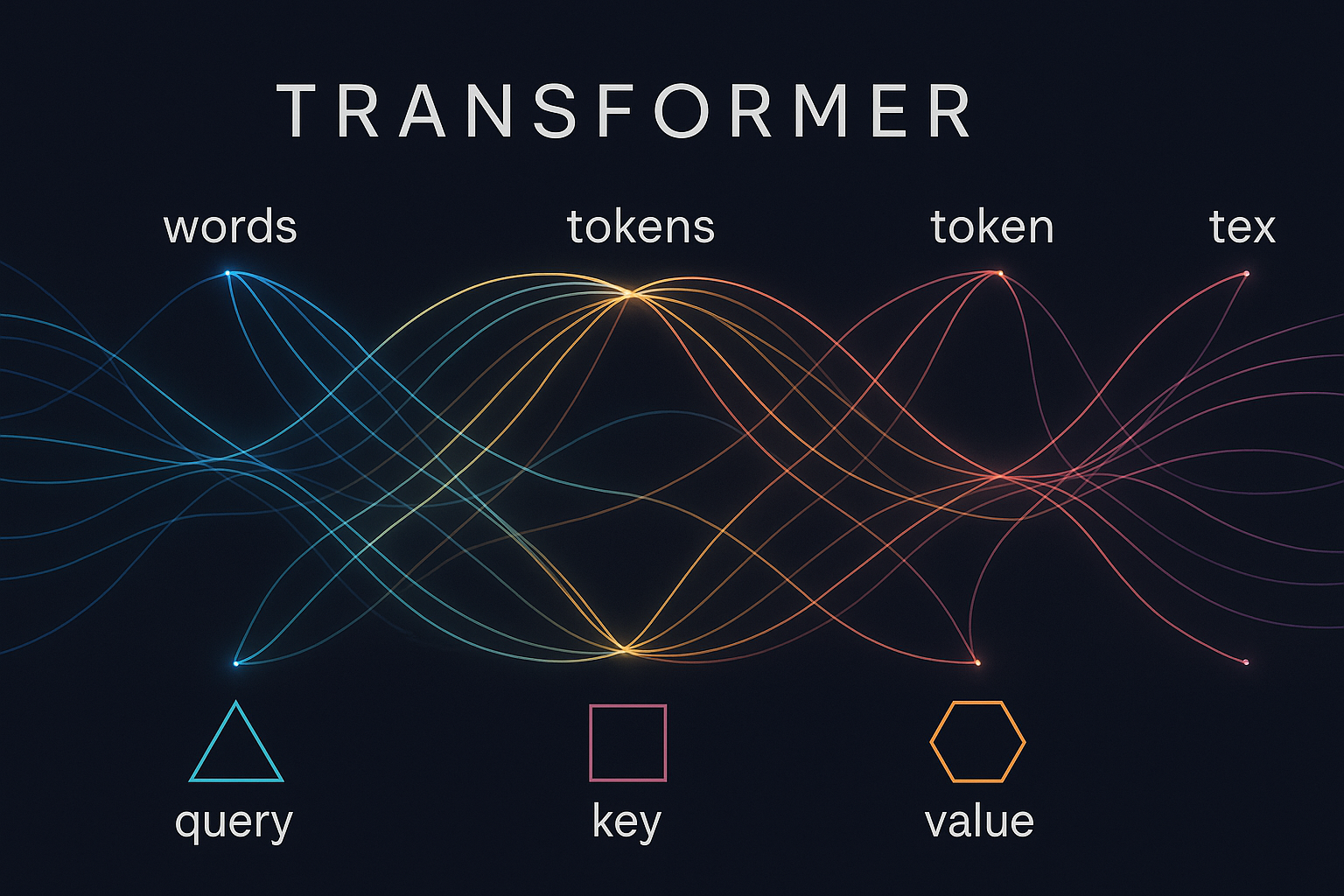

The three components that make it work

Each attention head uses three matrices — Query, Key, and Value — derived from the input. The Query asks “what am I looking for?”, the Key says “what do I contain?”, and the Value carries the actual content. The dot product of Query and Key gives the attention weight; the weighted sum of Values gives the output.

In practice, modern models run many attention heads in parallel (multi-head attention), each potentially learning different relationships — syntax, coreference, topic shifts.

Why scale matters

Transformers are data-hungry but parallelisable. Because every token attends to every other token simultaneously, training can be distributed across thousands of GPU cores efficiently. This is the core reason GPT-4, Gemini, and Claude could be trained on internet-scale corpora in weeks rather than decades.

What this means for AI you use today

Every time you ask a chatbot a question, a transformer reads your prompt and generates each output token by attending over the full context. The model does not “remember” previous conversations unless they are placed back into the context window — there is no separate memory system. The context window size (currently up to 1–2 million tokens in frontier models) determines how much prior conversation the model can attend to.

The limits

Attention is quadratic in sequence length: doubling the context window quadruples the compute. This is why context windows are not infinite, and why techniques like sparse attention, flash attention, and mixture-of-experts architectures exist — they are engineering responses to the quadratic wall.

Discussion

Sign in to join the discussion.

No comments yet. Be the first to share your thoughts.